[continued from part I]

Accessibility as a value system

Accessibility has always been part of the design conversation during product development on every MSFT team this blogger worked on. One could cynically attribute this to commercial incentives originating from the US government's requirement that software comply with the Americans with Disabilities Act. Federal sales are a massive source of Windows revenue, and failing a core requirement in a way that would keep the operating system out of that lucrative market is unthinkable. But the commitment to accessibility extended beyond the operating system division. Online services under the MSN umbrella arguably had an even greater focus on inclusiveness and on making sure all of the web properties would be usable for customers with disabilities. As with all other aspects of software engineering, individual bugs and oversights could happen, but you could count on every team having a program manager with accessibility in their portfolio, responsible for championing these considerations during development.

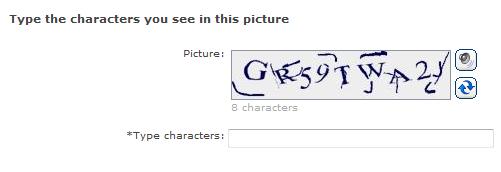

Luckily it was not particularly difficult to get accessibility right either, at least when designing websites. By the early 2000s, standardization efforts around core web technologies had already laid the foundations with features specifically designed for accessibility. For example, HTML images have an alternative text or alt-text attribute describing that image in words. In situations where users cannot see images, screen-reader software working in conjunction with the web browser can instead speak those words aloud. The World Wide Web Consortium had already published guidelines with hints like this (include meaningful alternative text with every image) to educate web developers. MSFT itself had additional internal guidelines for accessibility. For teams operating in the brave new world of “online services” (as distinct from the soon-to-be-antiquated rich-client or shrink-wrap models of delivering software for local installation) accessibility was essentially a solved problem, much like the problem of internationalization, or translating software into multiple languages, which used to bedevil many a software project until ground rules were worked out. As long as you followed certain guidelines, an obvious one being to not hard-code English language text intended for users in your code, your software could be easily translated for virtually any market without changing the code. In the same spirit, as long as you followed specific guidelines around designing a website, browsers and screen readers would take care of the rest and make your service accessible to all customers. Unless, that is, you went out of your way to introduce a feature that is inaccessible by design, such as visual CAPTCHAs.

Take #2: audio CAPTCHAs

To the extent CAPTCHAs are difficult enough to stop “offensive” software working on behalf of spammers, they also frustrate “honest” software that exists to help users with disabilities navigate the user interface. A strict interpretation of W3C guidelines dictates that every CAPTCHA image be accompanied by alternative text along the lines of “this picture contains the distorted sequence of letters X3JRQA.” Of course if we actually did that, spammers could cheat the puzzle, using automated software to learn the solution from the same hint.

The natural fallback was an audio CAPTCHA: instead of recognizing letters in a deliberately distorted image, users would be asked to recognize letters spoken out in a voice recording with deliberate noise added. Once again the trick is knowing exactly how to distort that soundtrack such that humans have an easy time while off-the-shelf voice recognition software stumbles. Once again, Microsoft Research to the rescue. Our colleagues knew that simply adding white-noise (aka Gaussian noise) would not do the trick. Voice recognition had become very good at tuning that out. Instead the difficulty of the audio CAPTCHA would rely on background “babble”— normal conversation sounds layered on top of the soundtrack at slightly lower volume. The perceptual challenge here is similar to carrying on a conversation in a loud space, focusing on the speaker in front of us while tuning out the cacophony of all the other voices echoing around the room.
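To make the “babble” idea concrete, here is a minimal sketch of layering background voices under a spoken clip. It is not the Microsoft Research code, which this post does not describe in any detail. The function name `mix_with_babble`, the `babble_gain` knob and the representation of audio as plain lists of samples are all assumptions made for illustration.

```python
def mix_with_babble(speech, babble_tracks, babble_gain=0.6):
    """Overlay background voices on the target speech at a lower volume.

    speech        -- list of float samples in [-1.0, 1.0] (the spoken letters)
    babble_tracks -- non-empty list of sample lists (other voices), each at
                     least as long as `speech`
    babble_gain   -- hypothetical difficulty knob; values below 1.0 keep the
                     babble quieter than the target speech
    """
    mixed = []
    for i, sample in enumerate(speech):
        # Average the background voices so that adding more tracks
        # does not raise the overall background level.
        background = sum(track[i] for track in babble_tracks) / len(babble_tracks)
        value = sample + babble_gain * background
        # Clip to the valid sample range to avoid overflow when writing audio.
        mixed.append(max(-1.0, min(1.0, value)))
    return mixed
```

In a sketch like this, `babble_gain` would be one of the difficulty knobs: turning it up makes the clip harder for speech recognition software and for people alike.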

As with visual CAPTCHAs, there were various knobs for adjusting the difficulty level of the puzzles. Chastened by the weak security configuration of the original rollout, we made more conservative choices this time. We recognized we were dealing with an example of the weakest-link effect: while honest users with accessibility needs are constrained to use the audio CAPTCHA, spammers have their choice of attacking either one. If either option is significantly easier to break, that is the one they are going to target. If it turned out that voice-recognition software could break the audio, it would not matter how good the original CAPTCHA was. All of the previous work optimizing visual CAPTCHAs would be undermined as rational spammers shifted over to breaking the audio to continue registering bogus accounts.

Fast forward to when the feature rolled out: that dreaded scenario did not come to pass. There was no spike in registrations coming through with audio puzzles. The initial version simply recreated the image puzzle in sound, but later iterations used distinct puzzles. This is important for determining in each case whether someone solved the image or the audio version. But even when using the same puzzle, you would expect attackers with an automated break to request a large number of audio puzzles, along with other signals such as a large number of “near misses” where the submitted solution is almost correct except for a letter or two. There was no such spike in the data. Collective sigh of relief all around.
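For readers wondering how a “near miss” could be counted, one plausible approach is to measure the edit distance between the expected solution and what was submitted. The sketch below is an illustration only: the post does not say how the Passport team computed this signal, and the names `edit_distance`, `classify_attempt` and the threshold of two letters are assumptions.

```python
def edit_distance(a, b):
    """Levenshtein distance: minimum single-character edits turning a into b."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(
                previous[j] + 1,         # delete a character from a
                current[j - 1] + 1,      # insert a character into a
                previous[j - 1] + cost,  # substitute (or keep) a character
            ))
        previous = current
    return previous[-1]


def classify_attempt(expected, submitted, near_miss_threshold=2):
    """Label a submission as 'correct', 'near_miss' or 'wrong'.

    near_miss_threshold is a hypothetical setting: "a letter or two" off.
    Comparison is case-insensitive.
    """
    distance = edit_distance(expected.upper(), submitted.upper())
    if distance == 0:
        return "correct"
    if distance <= near_miss_threshold:
        return "near_miss"
    return "wrong"
```

A sudden rise in the share of `near_miss` results on audio puzzles is the kind of pattern an automated solver that is almost, but not quite, good enough might leave behind.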

Calibrating the difficulty

Except it turned out the design missed in the opposite direction this time. It is unclear if spammers even bothered attacking the audio CAPTCHA, much less whether they eventually gave up in frustration and violently chucked their copy of Dragon NaturallySpeaking voice-recognition software across the room. There is little visibility into how the crooks operate. But one thing became clear over time: our audio CAPTCHA was also too difficult for honest users trying to sign up for accounts.

It’s not that anyone made a conscious decision to ship an unsolvable puzzle. On the contrary, deliberate steps were taken to control difficulty. Sound-alike consonants such as “B” and “P” were excluded, since they were considered too difficult to distinguish. This is similar to the visual CAPTCHA avoiding symbols that look identical such as the digit “1” and letter “I,” or the letters “O” and “Q” which are particularly likely to morph into each other as random segments are being added around letters. The problem is that all of these intuitions around what qualifies as the “right” difficulty level were never validated against actual users.
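Excluding confusable symbols amounts to drawing puzzles from a reduced alphabet. Here is a rough sketch of that idea. The exclusion sets contain only the examples mentioned above (the real lists were presumably longer), dropping both members of each pair is an assumption, and the function names and the six-character length are likewise illustrative.

```python
import secrets
import string

# Only the examples named in this post; the production lists are not known.
AUDIO_CONFUSABLE = set("BP")     # sound-alike consonants
VISUAL_CONFUSABLE = set("1IOQ")  # look-alike symbols


def build_alphabet(excluded):
    """Return uppercase letters and digits minus the excluded symbols."""
    candidates = string.ascii_uppercase + string.digits
    return "".join(ch for ch in candidates if ch not in excluded)


def generate_puzzle(alphabet, length=6):
    """Pick `length` symbols uniformly at random from the alphabet.

    Uses the secrets module so solutions are not predictable from
    earlier puzzles.
    """
    return "".join(secrets.choice(alphabet) for _ in range(length))
```

Note that a filter like this only encodes the designers' guesses about what is hard to tell apart; it says nothing about whether real users can solve the result.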

Widespread suspicion existed within the team that we were overdoing it on the difficulty scale. To anyone actually listening to sample audio clips, the letters were incomprehensible. Those of us raising that objection were met with a bit of folk-psychology wisdom: while the puzzles may sound incomprehensible to our untrained ears, users with visual disabilities are likely to have a far more heightened sense of hearing. They would be just fine, this theory went: our subjective evaluation of difficulty is not an accurate gauge because we are not the target audience. That collective delusion might have persisted, were it not for a proper usability study conducted with real users.

Reality check

The wake-up moment occurred in the usability labs on the MSFT Redmond-West (“Red-West”) campus. Our usability engineer helped recruit volunteers with specific accessibility requirements involving screen readers. These men and women were sat down in front of a computer to work through a scripted task as members of the Passport team stood helpless, observing from behind one-way glass. To control for other accessibility issues that may exist in registration flows, the tasks focused on solving audio CAPTCHAs, stripping away every other extraneous action from the study. Volunteers were simply given dozens of audio CAPTCHA samples calibrated for different settings, some easier and some harder than what we had deployed in production.

After two days, the verdict was in: our audio CAPTCHAs were far more difficult than we realized. Even more instructive were the post-study debriefings. One user said he would likely have asked for help from a relative to complete registering for an account. The worst way to fail customers is making them feel they need help from other people in order to go about their business. Another volunteer wondered aloud if the person designing these audio CAPTCHAs was influenced by John Cage and other avant-garde composers. The folk-psychology theory was bunk: users with visual disabilities were just as frustrated as everyone else trying to make sense of these mangled audio clips.

To be clear: this failure rests 100% with the Passport team, not our colleagues in MSFT Research who provided the basic building blocks. If anything, it was an exemplary case of “technology transfer” from research to product: MSR teams carried out innovative work pushing the envelope on the problem, handed over working proof-of-concept code and educated the product team on the choice of settings. It was our call to set the difficulty level high, and our cavalier attitude towards usability that green-lighted a critical feature absent any empirical evidence of its accuracy, all the while patting ourselves on the back that accessibility requirements were satisfied. Mission accomplished, Passport team!

In software engineering we rarely come face-to-face with our errors. Our customers are distant abstractions, caricatured into helpful stereotypes by marketing: “Abby” is the home user who prioritizes online safety, “Todd” owns a small business and appreciates time-saving features, while “Fred” the IT administrator is always looking to reduce technology costs. Yet we never get to hear directly from Abby, Fred or Todd on how well our work product actually helps them achieve those objectives. Success can be celebrated in metrics trending up (new registrations, logins per day) and, less commonly, trending down (fewer password resets, less outbound spam originating from Hotmail). Failures are abstract, if not entirely out of sight. Usability studies are the one exception, when rank-and-file engineers have an opportunity to meet these mythical “users” in the flesh and recognize beyond doubt when our work products have failed our customers.

CP
