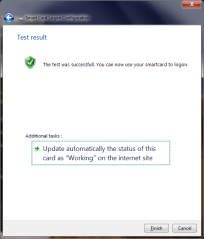

As earlier posts noted, many Android devices in use have an NFC controller and an embedded secure element (SE). That SE contains the same hardware internals as a traditional smart card and can perform similar security-sensitive functions, such as managing cryptographic keys. While Google Wallet, which uses the SE for contactless payments, is the canonical application, in principle other use cases such as authentication or data encryption can be implemented with appropriate code running on the SE. This is owing to the convenient property that in card emulation mode, the phone looks like a regular smart card to a standard PC, ready to transparently substitute in any scenario where traditional plastic cards were used. This property is easiest to demonstrate on Windows, but owing to the close fidelity of PC/SC API ports to OS X and Linux, it holds true for other popular operating systems as well. All of this raises the question of what it would take to leverage this embedded SE hardware for additional scenarios such as logging into a Windows machine or encrypting a portable volume using BitLocker To Go.

First, a disclaimer: using the phone as a smart card does not require secure element involvement at all. There is a notion of host-terminated card emulation: APDUs sent from another device over NFC are delivered to the Android OS for handling at the application processor, as opposed to being routed to the SE and bypassing the main OS. This mode is not exposed out of the box in Android, even though the PN544 NFC controller used in most Android phones is perfectly capable of it. Courtesy of an open source environment, a Cyanogen patch exists for enabling the functionality. The Android Explorations blog has a neat demonstration of using that tweak to emulate a smart card running a simple PKI “applet,” except this applet is implemented as a vanilla Android user-mode application that processes APDUs originating from an external peer.
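
To make the idea of an application "processing APDUs" concrete, here is a minimal sketch in plain Java of a handler that answers an ISO 7816-4 SELECT-by-AID command and rejects everything else. It is not the code from the demonstration mentioned above: the class name `ToyApduHandler` and the AID are hypothetical, and a real PKI applet would go on to handle PIN verification and signing commands after selection.

```java
import java.util.Arrays;

/** Illustrative toy handler; names and AID are assumptions, not from any real app. */
class ToyApduHandler {
    // Hypothetical AID for the emulated applet.
    static final byte[] APPLET_AID = {(byte) 0xF0, 0x01, 0x02, 0x03, 0x04, 0x05};

    // Standard ISO 7816-4 status words.
    static final byte[] SW_OK = {(byte) 0x90, 0x00};
    static final byte[] SW_FILE_NOT_FOUND = {0x6A, (byte) 0x82};
    static final byte[] SW_INS_NOT_SUPPORTED = {0x6D, 0x00};
    static final byte[] SW_WRONG_LENGTH = {0x67, 0x00};

    /** Takes a raw command APDU and returns the raw response APDU. */
    static byte[] process(byte[] apdu) {
        // A command APDU has at least a 4-byte header: CLA INS P1 P2.
        if (apdu == null || apdu.length < 4) {
            return SW_WRONG_LENGTH.clone();
        }
        int ins = apdu[1] & 0xFF;
        int p1 = apdu[2] & 0xFF;

        // INS=A4, P1=04 is SELECT by DF name (AID).
        if (ins == 0xA4 && p1 == 0x04) {
            if (apdu.length < 5) {
                return SW_WRONG_LENGTH.clone();
            }
            int lc = apdu[4] & 0xFF;
            if (apdu.length < 5 + lc) {
                return SW_WRONG_LENGTH.clone();
            }
            byte[] aid = Arrays.copyOfRange(apdu, 5, 5 + lc);
            return Arrays.equals(aid, APPLET_AID)
                    ? SW_OK.clone()
                    : SW_FILE_NOT_FOUND.clone();
        }
        return SW_INS_NOT_SUPPORTED.clone();
    }
}
```

Everything in this handler, including any key material a fuller version would hold, lives in ordinary application memory.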

The problem with this model is that sensitive data used by the emulated smart card application, such as cryptographic keys, is by definition accessible to the Android OS. That exposes these assets to software vulnerabilities in a commodity operating system, as well as to more subtle hardware risks such as side-channel leaks. While Android has a robust defense-in-depth model, the secure element has a smaller attack surface by virtue of its simpler functionality and built-in hardware tamper resistance.

With that caveat out of the way, there are two notions of “using the secure element” on Android:

- Exchanging APDUs with the SE, preferably from an Android application running on the same phone.

- Managing the contents of the SE. In particular, provisioning code that implements new functionality, such as the public-key authentication required for smart card logon.

Let’s start with the easy piece. The embedded SE sports two interfaces. Android applications reach it through the contact (or host) interface, while external devices such as point-of-sale terminals or smart card readers reach it through the contactless interface over NFC. In principle, applets running on the SE can detect which interface they are accessed from and discriminate based on that. Fortunately, most management tasks, including code installation, can be done over the NFC interface using card emulation mode, without involving Android at all. That said, it is often more convenient and natural to perform some actions (such as PIN entry) on the phone itself. That calls for an ordinary Android application to access the embedded SE over its contact interface.
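
Whichever interface is used, the exchange itself is the same command/response pattern. As a rough sketch, the Java below builds a SELECT-by-AID command and checks the status word of the reply. The `ApduChannel` interface is a hypothetical stand-in for whatever the platform provides to deliver bytes to the SE; it is not an Android API, and the class and method names are made up for illustration.

```java
/** Sketch of selecting an applet over an abstract APDU channel (names are assumptions). */
class SeSelectSketch {
    /** Hypothetical stand-in for the transport that carries APDUs to the SE. */
    interface ApduChannel {
        byte[] transceive(byte[] command);
    }

    /** Builds an ISO 7816-4 SELECT-by-AID command: 00 A4 04 00 Lc AID 00. */
    static byte[] buildSelect(byte[] aid) {
        if (aid == null || aid.length < 5 || aid.length > 16) {
            throw new IllegalArgumentException("AID must be 5 to 16 bytes");
        }
        byte[] cmd = new byte[5 + aid.length + 1];
        cmd[0] = 0x00;               // CLA
        cmd[1] = (byte) 0xA4;        // INS: SELECT
        cmd[2] = 0x04;               // P1: select by DF name (AID)
        cmd[3] = 0x00;               // P2: first or only occurrence
        cmd[4] = (byte) aid.length;  // Lc
        System.arraycopy(aid, 0, cmd, 5, aid.length);
        // The trailing Le byte is left as 0x00.
        return cmd;
    }

    /** Extracts the two-byte status word (SW1 SW2) from the end of a response. */
    static int statusWord(byte[] response) {
        if (response == null || response.length < 2) {
            throw new IllegalArgumentException("response too short");
        }
        int n = response.length;
        return ((response[n - 2] & 0xFF) << 8) | (response[n - 1] & 0xFF);
    }

    /** Returns true if the SE reports success (0x9000) for the SELECT. */
    static boolean select(ApduChannel channel, byte[] aid) {
        return statusWord(channel.transceive(buildSelect(aid))) == 0x9000;
    }
}
```

Keeping the transport behind an interface means the same logic could be driven from a PC-side reader in card emulation mode or from an application on the phone.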

Consistent with the Android security model, such access is strictly controlled by permissions granted to applications. Unlike other capabilities such as network access or making phone calls, this is not a discretionary permission that can be requested at install time subject to user approval. In Gingerbread, the access model was based on the signature of the calling application; more precisely, only applications signed with the same key as the NFC stack were granted access. Starting with ICS, a new model was introduced based on white-listing code signing certificates. There is an XML file on the system partition containing a list of certificates. Any APK signed with one of these certificates is granted access to the “NFC execution environment,” a fancy term for the embedded SE, and can send arbitrary APDUs to any applet present on the SE. That includes the special Global Platform card manager, which is responsible for managing card contents and installing new code on the card.
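
The white-list decision can be pictured with a small sketch. The Java below assumes the allowed certificates have already been read from the XML file as hex strings and that the caller has the certificates an APK was signed with; how Android actually parses the file and matches entries is not shown here, and `SignerAllowList` is a hypothetical name.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Locale;
import java.util.Set;

/** Sketch of a certificate white-list check; not the actual Android implementation. */
class SignerAllowList {
    private final Set<String> allowedHex = new HashSet<>();

    /** Takes hex-encoded certificates, assumed to come from the XML file. */
    SignerAllowList(List<String> allowedCertsHex) {
        for (String hex : allowedCertsHex) {
            allowedHex.add(hex.toLowerCase(Locale.ROOT));
        }
    }

    /** Lower-case hex encoding of a byte array. */
    static String toHex(byte[] data) {
        StringBuilder sb = new StringBuilder(data.length * 2);
        for (byte b : data) {
            sb.append(Character.forDigit((b >> 4) & 0xF, 16));
            sb.append(Character.forDigit(b & 0xF, 16));
        }
        return sb.toString();
    }

    /** Access is granted if any one of the APK's signing certificates is listed. */
    boolean isGranted(List<byte[]> apkSignerCerts) {
        for (byte[] cert : apkSignerCerts) {
            if (allowedHex.contains(toHex(cert))) {
                return true;
            }
        }
        return false;
    }
}
```

Note that the check is all-or-nothing: a listed signer can talk to every applet, including the card manager.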

CP
